The exponential function $y = e^x$ (red) and the corresponding Taylor polynomial of degree four (dashed green) around the origin.


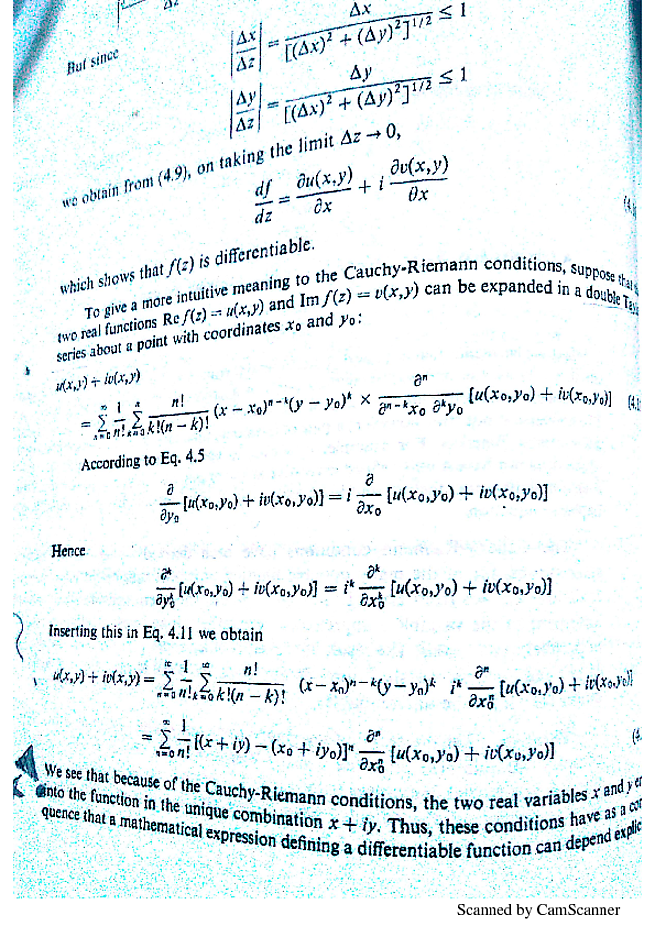

In calculus, Taylor's theorem gives an approximation of a k-times differentiable function around a given point by a k-th order Taylor polynomial. For analytic functions the Taylor polynomials at a given point are finite-order truncations of the function's Taylor series, which completely determines the function in some neighborhood of the point. The theorem can be thought of as the extension of linear approximation to higher-order polynomials, and in the case k = 2 it is often referred to as a quadratic approximation.[1] The exact content of 'Taylor's theorem' is not universally agreed upon. Indeed, there are several versions of it applicable in different situations, and some of them contain explicit estimates on the approximation error of the function by its Taylor polynomial.

Taylor's theorem is named after the mathematician Brook Taylor, who stated a version of it in 1712. Yet an explicit expression of the error was not provided until much later on by Joseph-Louis Lagrange. An earlier version of the result was already mentioned in 1671 by James Gregory.[2]

Taylor's theorem is taught in introductory-level calculus courses and is one of the central elementary tools in mathematical analysis. Within pure mathematics it is the starting point of more advanced asymptotic analysis, and it is commonly used in more applied fields of numerics as well as in mathematical physics. Taylor's theorem also generalizes to multivariate and vector-valued functions $f : \mathbb{R}^n \to \mathbb{R}^m$ in any dimensions n and m. This generalization of Taylor's theorem is the basis for the definition of so-called jets, which appear in differential geometry and partial differential equations.


Motivation

Graph of $f(x) = e^x$ (blue) with its linear approximation $P_1(x) = 1 + x$ (red) at a = 0.

If a real-valued function f is differentiable at the point a, then it has a linear approximation at the point a. This means that there exists a function $h_1$ such that

$$f(x) = f(a) + f'(a)(x - a) + h_1(x)(x - a), \qquad \lim_{x \to a} h_1(x) = 0.$$

Here

$$P_1(x) = f(a) + f'(a)(x - a)$$

is the linear approximation of f at the point a. The graph of $y = P_1(x)$ is the tangent line to the graph of f at x = a. The error in the approximation is

$$R_1(x) = f(x) - P_1(x) = h_1(x)(x - a).$$

Note that this goes to zero a little bit faster than x − a as x tends to a, given the limiting behavior of $h_1$.

Graph of $f(x) = e^x$ (blue) with its quadratic approximation $P_2(x) = 1 + x + x^2/2$ (red) at a = 0. Note the improvement in the approximation.

If we want a better approximation to f, we might try a quadratic polynomial instead of a linear function. Instead of just matching one derivative of f at a, we can match two derivatives, thus producing a polynomial that has the same slope and concavity as f at a. The quadratic polynomial in question is

$$P_2(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2}(x - a)^2.$$

Taylor's theorem ensures that the quadratic approximation is, in a sufficiently small neighborhood of the point a, a better approximation than the linear approximation. Specifically,

$$f(x) = P_2(x) + h_2(x)(x - a)^2, \qquad \lim_{x \to a} h_2(x) = 0.$$

Here the error in the approximation is

$$R_2(x) = f(x) - P_2(x) = h_2(x)(x - a)^2,$$

which, given the limiting behavior of $h_2$, goes to zero faster than $(x - a)^2$ as x tends to a.
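The comparison between the linear and quadratic approximations can be checked numerically. The following is a minimal sketch for $f(x) = e^x$ at a = 0; the function names are illustrative only. The printed ratios play the role of $h_1(x)$ and $h_2(x)$ and should shrink as x approaches 0.

```python
import math

def linear_error(x):
    # R_1(x) = e^x - P_1(x), with P_1(x) = 1 + x
    return math.exp(x) - (1.0 + x)

def quadratic_error(x):
    # R_2(x) = e^x - P_2(x), with P_2(x) = 1 + x + x^2 / 2
    return math.exp(x) - (1.0 + x + x * x / 2.0)

for x in (0.1, 0.01, 0.001):
    r1, r2 = linear_error(x), quadratic_error(x)
    # r1 / x approximates h_1(x); r2 / x^2 approximates h_2(x)
    print(f"x={x:<6} R1/x={r1 / x:.3e}  R2/x^2={r2 / x**2:.3e}")
```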

Approximation of $f(x) = 1/(1 + x^2)$ (blue) by its Taylor polynomials $P_k$ of order k = 1, ..., 16 centered at x = 0 (red) and x = 1 (green). The approximations do not improve at all outside (−1, 1) and (1 − √2, 1 + √2) respectively.

Similarly, we might get still better approximations to f if we use polynomials of higher degree, since then we can match even more derivatives with f at the selected base point.

In general, the error in approximating a function by a polynomial of degree k will go to zero a little bit faster than $(x - a)^k$ as x tends to a. But this might not always be the case: it is also possible that increasing the degree of the approximating polynomial does not increase the quality of approximation at all even if the function f to be approximated is infinitely many times differentiable. An example of this behavior is given below, and it is related to the fact that unlike analytic functions, more general functions are not (locally) determined by the values of their derivatives at a single point.

Taylor's theorem is of asymptotic nature: it only tells us that the error Rk in an approximation by a k-th order Taylor polynomial Pk tends to zero faster than any nonzero k-th degree polynomial as x → a. It does not tell us how large the error is in any concrete neighborhood of the center of expansion, but for this purpose there are explicit formulae for the remainder term (given below) which are valid under some additional regularity assumptions on f. These enhanced versions of Taylor's theorem typically lead to uniform estimates for the approximation error in a small neighborhood of the center of expansion, but the estimates do not necessarily hold for neighborhoods which are too large, even if the function f is analytic. In that situation one may have to select several Taylor polynomials with different centers of expansion to have reliable Taylor-approximations of the original function (see animation on the right.)

There are several things we might do with the remainder term:

- Estimate the error in using a polynomial Pk(x) of degree k to estimate f(x) on a given interval (a – r, a + r). (The interval and the degree k are fixed; we want to find the error.)

- Find the smallest degree k for which the polynomial Pk(x) approximates f(x) to within a given error (or tolerance) on a given interval (a − r, a + r) . (The interval and the error are fixed; we want to find the degree.)

- Find the largest interval (a − r, a + r) on which Pk(x) approximates f(x) to within a given error ('tolerance'). (The degree and the error are fixed; we want to find the interval.)

Taylor's theorem in one real variable

Statement of the theorem

The precise statement of the most basic version of Taylor's theorem is as follows:

Taylor's theorem.[3][4][5] Let k ≥ 1 be an integer and let the function f : R → R be k times differentiable at the point a ∈ R. Then there exists a function $h_k$ : R → R such that

$$f(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \cdots + \frac{f^{(k)}(a)}{k!}(x - a)^k + h_k(x)(x - a)^k$$

and $\lim_{x \to a} h_k(x) = 0$. This is called the Peano form of the remainder.

The polynomial appearing in Taylor's theorem is the k-th order Taylor polynomial

$$P_k(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \cdots + \frac{f^{(k)}(a)}{k!}(x - a)^k$$

of the function f at the point a. The Taylor polynomial is the unique 'asymptotic best fit' polynomial in the sense that if there exists a function $h_k$ : R → R and a k-th order polynomial p such that

$$f(x) = p(x) + h_k(x)(x - a)^k, \qquad \lim_{x \to a} h_k(x) = 0,$$

then $p = P_k$. Taylor's theorem describes the asymptotic behavior of the remainder term

$$R_k(x) = f(x) - P_k(x),$$

which is the approximation error when approximating f with its Taylor polynomial. Using the little-o notation, the statement in Taylor's theorem reads as

$$R_k(x) = o(|x - a|^k), \qquad x \to a.$$

Explicit formulas for the remainder

Under stronger regularity assumptions on f there are several precise formulae for the remainder term $R_k$ of the Taylor polynomial, the most common ones being the following.

Mean-value forms of the remainder. Let f : R → R be k + 1 times differentiable on the open interval with $f^{(k)}$ continuous on the closed interval between a and x.[6] Then

$$R_k(x) = \frac{f^{(k+1)}(\xi_L)}{(k+1)!}(x - a)^{k+1}$$

for some real number $\xi_L$ between a and x. This is the Lagrange form[7] of the remainder. Similarly,

$$R_k(x) = \frac{f^{(k+1)}(\xi_C)}{k!}(x - \xi_C)^k (x - a)$$

for some real number $\xi_C$ between a and x. This is the Cauchy form[8] of the remainder.

These refinements of Taylor's theorem are usually proved using the mean value theorem, whence the name. Other similar expressions can also be found. For example, if G(t) is continuous on the closed interval and differentiable with a non-vanishing derivative on the open interval between a and x, then

$$R_k(x) = \frac{f^{(k+1)}(\xi)}{k!}(x - \xi)^k \, \frac{G(x) - G(a)}{G'(\xi)}$$

for some number ξ between a and x. This version covers the Lagrange and Cauchy forms of the remainder as special cases, and is proved below using Cauchy's mean value theorem.

The statement for the integral form of the remainder is more advanced than the previous ones, and requires understanding of Lebesgue integration theory for the full generality. However, it also holds in the sense of the Riemann integral provided the (k + 1)th derivative of f is continuous on the closed interval [a, x].

Integral form of the remainder.[9] Let $f^{(k)}$ be absolutely continuous on the closed interval between a and x. Then

$$R_k(x) = \int_a^x \frac{f^{(k+1)}(t)}{k!}(x - t)^k \, dt.$$

Due to absolute continuity of $f^{(k)}$ on the closed interval between a and x, its derivative $f^{(k+1)}$ exists as an $L^1$-function, and the result can be proven by a formal calculation using the fundamental theorem of calculus and integration by parts.
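As a sanity check, the integral form can be compared numerically with the directly computed remainder. The sketch below assumes $f(x) = e^x$ and a = 0, so that $f^{(k+1)}(t) = e^t$, and uses a plain midpoint rule; the helper names and the step count are illustrative choices, not part of the theorem.

```python
import math

def taylor_exp(x, k):
    """k-th order Taylor polynomial of exp at a = 0."""
    return sum(x**j / math.factorial(j) for j in range(k + 1))

def integral_remainder_exp(x, k, n=20000):
    """Midpoint-rule approximation of the integral of e^t (x - t)^k / k! over [0, x]."""
    h = x / n
    total = 0.0
    for i in range(n):
        t = (i + 0.5) * h  # midpoint of the i-th subinterval
        total += math.exp(t) * (x - t) ** k / math.factorial(k)
    return total * h

x, k = 1.0, 3
direct = math.exp(x) - taylor_exp(x, k)      # R_k(x) = f(x) - P_k(x)
via_integral = integral_remainder_exp(x, k)  # integral form of R_k(x)
print(direct, via_integral)
```

The two printed values should agree up to the quadrature error of the midpoint rule.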

Estimates for the remainder

It is often useful in practice to be able to estimate the remainder term appearing in the Taylor approximation, rather than having an exact formula for it. Suppose that f is (k + 1)-times continuously differentiable in an interval I containing a. Suppose that there are real constants q and Q such that

$$q \le f^{(k+1)}(x) \le Q$$

throughout I. Then the remainder term satisfies the inequality[10]

$$q \, \frac{(x - a)^{k+1}}{(k+1)!} \le R_k(x) \le Q \, \frac{(x - a)^{k+1}}{(k+1)!}$$

if x > a, and a similar estimate if x < a. This is a simple consequence of the Lagrange form of the remainder. In particular, if

$$|f^{(k+1)}(x)| \le M$$

on an interval I = (a − r, a + r) with some r > 0, then

$$|R_k(x)| \le M \, \frac{|x - a|^{k+1}}{(k+1)!} \le M \, \frac{r^{k+1}}{(k+1)!}$$

for all x ∈ (a − r, a + r). The second inequality is called a uniform estimate, because it holds uniformly for all x on the interval (a − r, a + r).
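The uniform estimate can be illustrated with the sine function, whose derivatives are all bounded by M = 1. The sketch below is an illustration under that assumption; the choice k = 7, r = π and the sampling grid are arbitrary, and the function names are hypothetical.

```python
import math

def taylor_sin(x, k):
    """Taylor polynomial of sin at a = 0, keeping terms of degree <= k."""
    total = 0.0
    for n in range(1, k + 1, 2):  # only odd powers contribute
        total += (-1) ** ((n - 1) // 2) * x**n / math.factorial(n)
    return total

def uniform_bound(M, r, k):
    """M * r^(k+1) / (k+1)!, the uniform estimate on (a - r, a + r)."""
    return M * r ** (k + 1) / math.factorial(k + 1)

k, r = 7, math.pi
grid = [-r + 2 * r * i / 1000 for i in range(1001)]
worst = max(abs(math.sin(x) - taylor_sin(x, k)) for x in grid)
bound = uniform_bound(1.0, r, k)
print(f"largest sampled error = {worst:.4f}, uniform bound = {bound:.4f}")
```

The sampled error stays below the bound, although the bound is not sharp.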

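Example

Approximation of $e^x$ (blue) by its Taylor polynomials $P_k$ of order k = 1, ..., 7 centered at x = 0 (red).

Suppose that we wish to approximate the function $f(x) = e^x$ on the interval [−1, 1] while ensuring that the error in the approximation is no more than $10^{-5}$. In this example we pretend that we only know the following properties of the exponential function:

$$e^0 = 1, \qquad \frac{d}{dx} e^x = e^x, \qquad e^x > 0, \qquad x \in \mathbb{R}. \qquad (*)$$

From these properties it follows that $f^{(k)}(x) = e^x$ for all k, and in particular, $f^{(k)}(0) = 1$. Hence the k-th order Taylor polynomial of f at 0 and its remainder term in the Lagrange form are given by

$$P_k(x) = 1 + x + \frac{x^2}{2!} + \cdots + \frac{x^k}{k!}, \qquad R_k(x) = \frac{e^{\xi}}{(k+1)!} x^{k+1},$$

where ξ is some number between 0 and x. Since $e^x$ is increasing by (*), we can simply use $e^x \le 1$ for x ∈ [−1, 0] to estimate the remainder on the subinterval [−1, 0]. To obtain an upper bound for the remainder on [0, 1], we use the property $e^{\xi} < e^x$ for 0 < ξ < x to estimate

$$e^x = 1 + x + \frac{e^{\xi}}{2} x^2 < 1 + x + \frac{e^x}{2} x^2, \qquad 0 < x \le 1,$$

using the second order Taylor expansion. Then we solve for $e^x$ to deduce that

$$e^x \le \frac{1 + x}{1 - \frac{x^2}{2}} = 2 \, \frac{1 + x}{2 - x^2} \le 4, \qquad 0 \le x \le 1,$$

simply by maximizing the numerator and minimizing the denominator. Combining these estimates for $e^x$ we see that

$$|R_k(x)| \le \frac{4 |x|^{k+1}}{(k+1)!} \le \frac{4}{(k+1)!}, \qquad -1 \le x \le 1,$$

so the required precision is certainly reached when

$$\frac{4}{(k+1)!} < 10^{-5} \quad \Longleftrightarrow \quad 4 \cdot 10^5 < (k+1)! \quad \Longleftrightarrow \quad k \ge 9.$$

(See factorial or compute by hand the values 9! = 362 880 and 10! = 3 628 800.) As a conclusion, Taylor's theorem leads to the approximation

$$e^x = 1 + x + \frac{x^2}{2!} + \cdots + \frac{x^9}{9!} + R_9(x), \qquad |R_9(x)| < 10^{-5}, \qquad -1 \le x \le 1.$$

For instance, this approximation provides a decimal expression e ≈ 2.71828, correct up to five decimal places.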
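The degree search in this example can be reproduced in a few lines. The sketch below takes the bound $4/(k+1)!$ from the example as given and looks for the smallest k that meets a tolerance; the function names are illustrative.

```python
import math

def smallest_degree(tol):
    """Smallest k with 4 / (k + 1)! < tol, the bound derived in the example."""
    k = 0
    while 4.0 / math.factorial(k + 1) >= tol:
        k += 1
    return k

def taylor_exp(x, k):
    """k-th order Taylor polynomial of exp at a = 0."""
    return sum(x**j / math.factorial(j) for j in range(k + 1))

k = smallest_degree(1e-5)
approx_e = taylor_exp(1.0, k)
print(k, approx_e, abs(approx_e - math.e))
```

Because the bound is conservative, the actual error at x = 1 is noticeably smaller than the tolerance.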

Relationship to analyticity

Taylor expansions of real analytic functions

Let I ⊂ R be an open interval. By definition, a function f : I → R is real analytic if it is locally defined by a convergent power series. This means that for every a ∈ I there exists some r > 0 and a sequence of coefficients $c_k$ ∈ R such that (a − r, a + r) ⊂ I and

$$f(x) = \sum_{k=0}^{\infty} c_k (x - a)^k = c_0 + c_1 (x - a) + c_2 (x - a)^2 + \cdots, \qquad |x - a| < r.$$

In general, the radius of convergence of a power series can be computed from the Cauchy–Hadamard formula

$$\frac{1}{R} = \limsup_{k \to \infty} |c_k|^{1/k}.$$

This result is based on comparison with a geometric series, and the same method shows that if the power series based on a converges for some b ∈ R, it must converge uniformly on the closed interval $[a - r_b, a + r_b]$, where $r_b < |b - a|$. Here only the convergence of the power series is considered, and it might well be that (a − R, a + R) extends beyond the domain I of f.

The Taylor polynomials of the real analytic function f at a are simply the finite truncations

$$P_k(x) = \sum_{j=0}^{k} c_j (x - a)^j, \qquad c_j = \frac{f^{(j)}(a)}{j!}$$

of its locally defining power series, and the corresponding remainder terms are locally given by the analytic functions

$$R_k(x) = \sum_{j=k+1}^{\infty} c_j (x - a)^j = (x - a)^k h_k(x), \qquad |x - a| < r.$$

Here the functions

$$h_k : (a - r, a + r) \to \mathbb{R}, \qquad h_k(x) = (x - a) \sum_{j=0}^{\infty} c_{k+1+j} (x - a)^j$$

are also analytic, since their defining power series have the same radius of convergence as the original series. Assuming that [a − r, a + r] ⊂ I and r < R, all these series converge uniformly on (a − r, a + r). Naturally, in the case of analytic functions one can estimate the remainder term $R_k(x)$ by the tail of the sequence of the derivatives f′(a) at the center of the expansion, but using complex analysis also another possibility arises, which is described below.

Taylor's theorem and convergence of Taylor series

The Taylor series of f will converge in some interval, given that all its derivatives are bounded over it and do not grow too fast as k goes to infinity. (However, it is not always the case that the Taylor series of f, if it converges, will in fact converge to f, as explained below; f is then said to be non-analytic.)

One might think of the Taylor series

$$f(x) \approx \sum_{k=0}^{\infty} c_k (x - a)^k = c_0 + c_1 (x - a) + c_2 (x - a)^2 + \cdots$$

of an infinitely many times differentiable function f : R → R as its 'infinite order Taylor polynomial' at a. Now the estimates for the remainder imply that if, for any r, the derivatives of f are known to be bounded over (a − r, a + r), then for any order k and for any r > 0 there exists a constant $M_{k,r} > 0$ such that

$$|R_k(x)| \le M_{k,r} \, \frac{|x - a|^{k+1}}{(k+1)!} \qquad (*)$$

for every x ∈ (a − r, a + r). Sometimes the constants $M_{k,r}$ can be chosen in such a way that $M_{k,r}$ is bounded above, for fixed r and all k. Then the Taylor series of f converges uniformly to some analytic function

$$T_f : (a - r, a + r) \to \mathbb{R}, \qquad T_f(x) = \sum_{k=0}^{\infty} \frac{f^{(k)}(a)}{k!} (x - a)^k.$$

(One also gets convergence even if $M_{k,r}$ is not bounded above as long as it grows slowly enough.)

However, even though $T_f$ is always analytic, the case may be that f is not. That is to say, it may well be that an infinitely many times differentiable function f has a Taylor series at a which converges on some open neighborhood of a, but the limit function $T_f$ is different from f. An important example of this phenomenon is provided by the non-analytic smooth function known as a flat function:

$$f : \mathbb{R} \to \mathbb{R}, \qquad f(x) = \begin{cases} e^{-1/x^2} & x > 0, \\ 0 & x \le 0. \end{cases}$$

Using the chain rule one can show by mathematical induction that for any order k,

$$f^{(k)}(x) = \begin{cases} \dfrac{p_k(x)}{x^{3k}} \, e^{-1/x^2} & x > 0, \\ 0 & x \le 0 \end{cases}$$

for some polynomial $p_k$ of degree 2(k − 1). The function $e^{-1/x^2}$ tends to zero faster than any polynomial as x → 0, so f is infinitely many times differentiable and $f^{(k)}(0) = 0$ for every positive integer k. Now the estimates for the remainder for the Taylor polynomials show that the Taylor series of f converges uniformly to the zero function on the whole real axis. Nothing is wrong here:

- The Taylor series of f converges uniformly to the zero function Tf(x) = 0.

- The zero function is analytic and every coefficient in its Taylor series is zero.

- The function f is infinitely many times differentiable, but not analytic.

- For any k ∈ N and r > 0 there exists Mk,r > 0 such that the remainder term for the k-th order Taylor polynomial of f satisfies (*), and is bounded above, for all k and fixed r.
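The behavior of the flat function can be observed numerically. The sketch below assumes the piecewise definition $f(x) = e^{-1/x^2}$ for x > 0 and f(x) = 0 otherwise; the helper names are illustrative. It shows that f vanishes near 0 faster than $x^{10}$ while differing from its identically zero Taylor series away from 0.

```python
import math

def flat(x):
    """f(x) = exp(-1/x^2) for x > 0 and 0 otherwise."""
    return math.exp(-1.0 / (x * x)) if x > 0 else 0.0

def taylor_series_at_zero(x):
    # Every derivative of `flat` vanishes at 0, so each partial sum is 0.
    return 0.0

for x in (0.05, 0.1, 0.2):
    # The ratio f(x) / x^10 is still tiny, i.e. f is "flatter" than x^10 at 0.
    print(x, flat(x) / x**10)

# Away from 0 the function and its Taylor series disagree.
print(flat(1.0), taylor_series_at_zero(1.0))
```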

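Taylor's theorem in complex analysis

Taylor's theorem generalizes to functions f : C → C which are complex differentiable in an open subset U ⊂ C of the complex plane. However, its usefulness is dwarfed by other general theorems in complex analysis. Namely, stronger versions of related results can be deduced for complex differentiable functions f : U → C using Cauchy's integral formula as follows.

Let r > 0 be such that the closed disk B(z, r) ∪ S(z, r) is contained in U. Then Cauchy's integral formula with a positive parametrization $\gamma(t) = z + r e^{it}$ of the circle S(z, r) with t ∈ [0, 2π] gives

$$f(z) = \frac{1}{2\pi i} \int_{\gamma} \frac{f(w)}{w - z} \, dw, \quad f'(z) = \frac{1}{2\pi i} \int_{\gamma} \frac{f(w)}{(w - z)^2} \, dw, \quad \ldots, \quad f^{(k)}(z) = \frac{k!}{2\pi i} \int_{\gamma} \frac{f(w)}{(w - z)^{k+1}} \, dw.$$

Here all the integrands are continuous on the circle S(z, r), which justifies differentiation under the integral sign. In particular, if f is once complex differentiable on the open set U, then it is actually infinitely many times complex differentiable on U. One also obtains Cauchy's estimates[11]

$$|f^{(k)}(z)| \le \frac{k!}{2\pi} \int_{\gamma} \frac{M_r}{|w - z|^{k+1}} \, |dw| = \frac{k! \, M_r}{r^k}, \qquad M_r = \max_{|w - z| = r} |f(w)|$$

for any z ∈ U and r > 0 such that B(z, r) ∪ S(z, r) ⊂ U. These estimates imply that the complex Taylor series

$$T_f(z) = \sum_{k=0}^{\infty} \frac{f^{(k)}(c)}{k!} (z - c)^k$$

of f converges uniformly on any open disk B(c, r) ⊂ U with S(c, r) ⊂ U into some function $T_f$. Furthermore, using the contour integral formulae for the derivatives $f^{(k)}(c)$,

$$T_f(z) = \sum_{k=0}^{\infty} \frac{(z - c)^k}{2\pi i} \int_{\gamma} \frac{f(w)}{(w - c)^{k+1}} \, dw = \frac{1}{2\pi i} \int_{\gamma} \frac{f(w)}{w - c} \sum_{k=0}^{\infty} \left( \frac{z - c}{w - c} \right)^k dw = \frac{1}{2\pi i} \int_{\gamma} \frac{f(w)}{w - z} \, dw = f(z),$$

so any complex differentiable function f in an open set U ⊂ C is in fact complex analytic. All that is said for real analytic functions here holds also for complex analytic functions with the open interval I replaced by an open subset U ⊂ C and a-centered intervals (a − r, a + r) replaced by c-centered disks B(c, r). In particular, the Taylor expansion holds in the form

$$f(z) = P_k(z) + R_k(z), \qquad P_k(z) = \sum_{j=0}^{k} \frac{f^{(j)}(c)}{j!} (z - c)^j,$$

where the remainder term $R_k$ is complex analytic. Methods of complex analysis provide some powerful results regarding Taylor expansions. For example, using Cauchy's integral formula for any positively oriented Jordan curve γ which parametrizes the boundary ∂W ⊂ U of a region W ⊂ U, one obtains expressions for the derivatives $f^{(j)}(c)$ as above, and modifying slightly the computation for $T_f(z) = f(z)$, one arrives at the exact formula

$$R_k(z) = \sum_{j=k+1}^{\infty} \frac{(z - c)^j}{2\pi i} \int_{\gamma} \frac{f(w)}{(w - c)^{j+1}} \, dw = \frac{(z - c)^{k+1}}{2\pi i} \int_{\gamma} \frac{f(w) \, dw}{(w - c)^{k+1} (w - z)}, \qquad z \in W.$$

The important feature here is that the quality of the approximation by a Taylor polynomial on the region W ⊂ U is dominated by the values of the function f itself on the boundary ∂W ⊂ U. Similarly, applying Cauchy's estimates to the series expression for the remainder, one obtains the uniform estimates

$$|R_k(z)| \le \sum_{j=k+1}^{\infty} \frac{M_r |z - c|^j}{r^j} = \frac{M_r}{r^{k+1}} \, \frac{|z - c|^{k+1}}{1 - \frac{|z - c|}{r}} \le \frac{M_r \beta^{k+1}}{1 - \beta}, \qquad \frac{|z - c|}{r} \le \beta < 1.$$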
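Cauchy's integral formula for the derivatives also gives a practical way to approximate Taylor coefficients from function values on a circle. The following is a rough sketch that discretizes the contour integral with equally spaced sample points; the function name, the radius and the number of samples are assumptions made for illustration.

```python
import cmath
import math

def cauchy_derivative(f, c, k, r=1.0, n=64):
    """Approximate f^(k)(c) from samples of f on the circle |w - c| = r."""
    total = 0.0 + 0.0j
    for m in range(n):
        t = 2.0 * math.pi * m / n
        w = c + r * cmath.exp(1j * t)
        # With w = c + r e^{it} and dw = i r e^{it} dt, the contour integral
        # k!/(2 pi i) * integral of f(w)/(w - c)^(k+1) dw reduces to an
        # average of f(w) e^{-ikt} over the circle, scaled by k!/r^k.
        total += f(w) * cmath.exp(-1j * k * t)
    return math.factorial(k) * total / (n * r**k)

d3 = cauchy_derivative(cmath.exp, 0.0, 3)  # third derivative of exp at 0
print(d3)
```

For the exponential function every derivative at 0 equals 1, and the result also respects Cauchy's estimate $k! \, M_r / r^k$ with $M_r = e$ on the unit circle.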

Example

Complex plot of $f(z) = 1/(1 + z^2)$. Modulus is shown by elevation and argument by coloring: cyan = 0, blue = π/3, violet = 2π/3, red = π, yellow = 4π/3, green = 5π/3.

The function

$$f : \mathbb{R} \to \mathbb{R}, \qquad f(x) = \frac{1}{1 + x^2}$$

is real analytic, that is, locally determined by its Taylor series. This function was plotted above to illustrate the fact that some elementary functions cannot be approximated by Taylor polynomials in neighborhoods of the center of expansion which are too large. This kind of behavior is easily understood in the framework of complex analysis. Namely, the function f extends into a meromorphic function

$$f : \mathbb{C} \cup \{\infty\} \to \mathbb{C} \cup \{\infty\}, \qquad f(z) = \frac{1}{1 + z^2}$$

on the compactified complex plane. It has simple poles at z = i and z = −i, and it is analytic elsewhere. Now its Taylor series centered at $z_0$ converges on any disc $B(z_0, r)$ with $r < |z - z_0|$, where the same Taylor series converges at z ∈ C. Therefore, the Taylor series of f centered at 0 converges on B(0, 1) and it does not converge for any z ∈ C with |z| > 1 due to the poles at i and −i. For the same reason the Taylor series of f centered at 1 converges on B(1, √2) and does not converge for any z ∈ C with |z − 1| > √2.
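The effect of the poles on real arguments can be seen directly. The sketch below uses the expansion $1/(1 + x^2) = 1 - x^2 + x^4 - \cdots$ around 0, which follows from the geometric series with ratio $-x^2$; the function names and sample points are illustrative.

```python
def f(x):
    return 1.0 / (1.0 + x * x)

def maclaurin_partial_sum(x, n_terms):
    """Sum of the first n_terms of 1 - x^2 + x^4 - x^6 + ..."""
    return sum((-x * x) ** n for n in range(n_terms))

for x in (0.5, 1.5):
    for n_terms in (10, 50):
        err = abs(maclaurin_partial_sum(x, n_terms) - f(x))
        print(f"x={x} terms={n_terms} error={err:.3e}")
```

Inside (−1, 1) the error shrinks as terms are added, while at x = 1.5 it grows without bound, even though f itself is perfectly smooth there.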

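Generalizations of Taylor's theorem

Higher-order differentiability

A function f : $\mathbb{R}^n$ → R is differentiable at a ∈ $\mathbb{R}^n$ if and only if there exists a linear functional L : $\mathbb{R}^n$ → R and a function h : $\mathbb{R}^n$ → R such that

$$f(x) = f(a) + L(x - a) + h(x) \, \|x - a\|, \qquad \lim_{x \to a} h(x) = 0.$$

If this is the case, then L = df(a) is the (uniquely defined) differential of f at the point a. Furthermore, the partial derivatives of f then exist at a and the differential of f at a is given by

$$df(a)(v) = \frac{\partial f}{\partial x_1}(a) \, v_1 + \cdots + \frac{\partial f}{\partial x_n}(a) \, v_n.$$

Introduce the multi-index notation

$$|\alpha| = \alpha_1 + \cdots + \alpha_n, \qquad \alpha! = \alpha_1! \cdots \alpha_n!, \qquad x^{\alpha} = x_1^{\alpha_1} \cdots x_n^{\alpha_n}$$

for α ∈ $\mathbb{N}^n$ and x ∈ $\mathbb{R}^n$. If all the k-th order partial derivatives of f : $\mathbb{R}^n$ → R are continuous at a ∈ $\mathbb{R}^n$, then by Clairaut's theorem, one can change the order of mixed derivatives at a, so the notation

$$D^{\alpha} f = \frac{\partial^{|\alpha|} f}{\partial x_1^{\alpha_1} \cdots \partial x_n^{\alpha_n}}, \qquad |\alpha| \le k$$

for the higher order partial derivatives is justified in this situation. The same is true if all the (k − 1)-th order partial derivatives of f exist in some neighborhood of a and are differentiable at a.[12] Then we say that f is k times differentiable at the point a.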

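Taylor's theorem for multivariate functions

Multivariate version of Taylor's theorem.[13] Let f : $\mathbb{R}^n$ → R be a k times differentiable function at the point a ∈ $\mathbb{R}^n$. Then there exist functions $h_{\alpha}$ : $\mathbb{R}^n$ → R such that

$$f(x) = \sum_{|\alpha| \le k} \frac{D^{\alpha} f(a)}{\alpha!} (x - a)^{\alpha} + \sum_{|\alpha| = k} h_{\alpha}(x) (x - a)^{\alpha}, \qquad \lim_{x \to a} h_{\alpha}(x) = 0.$$

If the function f : $\mathbb{R}^n$ → R is k + 1 times continuously differentiable in the closed ball B, then one can derive an exact formula for the remainder in terms of (k+1)-th order partial derivatives of f in this neighborhood. Namely,

$$f(x) = \sum_{|\alpha| \le k} \frac{D^{\alpha} f(a)}{\alpha!} (x - a)^{\alpha} + \sum_{|\beta| = k+1} R_{\beta}(x) (x - a)^{\beta}, \qquad R_{\beta}(x) = \frac{|\beta|}{\beta!} \int_0^1 (1 - t)^{|\beta| - 1} D^{\beta} f\big(a + t(x - a)\big) \, dt.$$

In this case, due to the continuity of (k+1)-th order partial derivatives in the compact set B, one immediately obtains the uniform estimates

$$|R_{\beta}(x)| \le \frac{1}{\beta!} \max_{|\alpha| = |\beta|} \max_{y \in B} |D^{\alpha} f(y)|, \qquad x \in B.$$

Example in two dimensions

For example, the third-order Taylor polynomial of a smooth function f : $\mathbb{R}^2$ → R is, denoting x − a = v,

$$\begin{aligned} P_3(x) = f(a) &+ \frac{\partial f}{\partial x_1}(a) \, v_1 + \frac{\partial f}{\partial x_2}(a) \, v_2 + \frac{\partial^2 f}{\partial x_1^2}(a) \, \frac{v_1^2}{2!} + \frac{\partial^2 f}{\partial x_1 \partial x_2}(a) \, v_1 v_2 + \frac{\partial^2 f}{\partial x_2^2}(a) \, \frac{v_2^2}{2!} \\ &+ \frac{\partial^3 f}{\partial x_1^3}(a) \, \frac{v_1^3}{3!} + \frac{\partial^3 f}{\partial x_1^2 \partial x_2}(a) \, \frac{v_1^2 v_2}{2! \, 1!} + \frac{\partial^3 f}{\partial x_1 \partial x_2^2}(a) \, \frac{v_1 v_2^2}{1! \, 2!} + \frac{\partial^3 f}{\partial x_2^3}(a) \, \frac{v_2^3}{3!}. \end{aligned}$$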
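The multi-index formula translates almost literally into code. The sketch below evaluates a two-variable Taylor polynomial from a table of partial derivatives at the base point; the test function $f(x_1, x_2) = e^{x_1} \sin x_2$, the dictionary layout and all names are assumptions chosen for illustration.

```python
import math

def taylor_2d(derivs, a, x, order):
    """Evaluate the sum over |alpha| <= order of D^alpha f(a) / alpha! * (x - a)^alpha.

    `derivs` maps a multi-index (i, j) to the partial derivative
    d^(i+j) f / dx1^i dx2^j evaluated at a.
    """
    v1, v2 = x[0] - a[0], x[1] - a[1]
    total = 0.0
    for (i, j), value in derivs.items():
        if i + j <= order:
            total += value / (math.factorial(i) * math.factorial(j)) * v1**i * v2**j
    return total

# Partial derivatives of f(x1, x2) = exp(x1) * sin(x2) at a = (0, 0):
# D^(i, j) f(0, 0) equals the j-th derivative of sin at 0, independent of i.
sin_derivs_at_0 = [0.0, 1.0, 0.0, -1.0]
derivs = {(i, j): sin_derivs_at_0[j]
          for i in range(4) for j in range(4) if i + j <= 3}

a, x = (0.0, 0.0), (0.1, 0.1)
exact = math.exp(x[0]) * math.sin(x[1])
p2 = taylor_2d(derivs, a, x, 2)
p3 = taylor_2d(derivs, a, x, 3)
print(exact, p2, p3)
```

Going from second to third order visibly reduces the error at this sample point, in line with the remainder estimates.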

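Proofs

Proof for Taylor's theorem in one real variable

Let[14]

$$h_k(x) = \begin{cases} \dfrac{f(x) - P(x)}{(x - a)^k} & x \ne a, \\ 0 & x = a, \end{cases}$$

where, as in the statement of Taylor's theorem,

$$P(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \cdots + \frac{f^{(k)}(a)}{k!}(x - a)^k.$$

It is sufficient to show that

$$\lim_{x \to a} h_k(x) = 0.$$

The proof here is based on repeated application of L'Hôpital's rule. Note that, for each j = 0, 1, ..., k − 1, $f^{(j)}(a) = P^{(j)}(a)$. Hence each of the first k − 1 derivatives of the numerator in $h_k(x)$ vanishes at x = a, and the same is true of the denominator. Also, since the condition that the function f be k times differentiable at a point requires differentiability up to order k − 1 in a neighborhood of said point (this is true because differentiability requires a function to be defined in a whole neighborhood of a point), the numerator and its k − 2 derivatives are differentiable in a neighborhood of a. Clearly, the denominator also satisfies said condition, and additionally does not vanish unless x = a. Therefore all conditions necessary for L'Hôpital's rule are fulfilled, and its use is justified. So

$$\lim_{x \to a} \frac{f(x) - P(x)}{(x - a)^k} = \lim_{x \to a} \frac{\frac{d}{dx}\big(f(x) - P(x)\big)}{\frac{d}{dx}(x - a)^k} = \cdots = \lim_{x \to a} \frac{\frac{d^{k-1}}{dx^{k-1}}\big(f(x) - P(x)\big)}{\frac{d^{k-1}}{dx^{k-1}}(x - a)^k} = \frac{1}{k!} \lim_{x \to a} \frac{f^{(k-1)}(x) - P^{(k-1)}(x)}{x - a} = \frac{1}{k!}\big(f^{(k)}(a) - P^{(k)}(a)\big) = 0,$$

where the second to last equality follows by the definition of the derivative at x = a.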

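Derivation for the mean value forms of the remainder

Let G be any real-valued function, continuous on the closed interval between a and x and differentiable with a non-vanishing derivative on the open interval between a and x, and define

$$F(t) = f(t) + f'(t)(x - t) + \frac{f''(t)}{2!}(x - t)^2 + \cdots + \frac{f^{(k)}(t)}{k!}(x - t)^k$$

for t in the closed interval between a and x. Then, by Cauchy's mean value theorem,

$$\frac{F'(\xi)}{G'(\xi)} = \frac{F(x) - F(a)}{G(x) - G(a)} \qquad (*)$$

for some ξ on the open interval between a and x. Note that here the numerator $F(x) - F(a) = R_k(x)$ is exactly the remainder of the Taylor polynomial for f(x). Compute

$$\begin{aligned} F'(t) &= f'(t) + \big(f''(t)(x - t) - f'(t)\big) + \left(\frac{f^{(3)}(t)}{2!}(x - t)^2 - \frac{f''(t)}{1!}(x - t)\right) + \cdots + \left(\frac{f^{(k+1)}(t)}{k!}(x - t)^k - \frac{f^{(k)}(t)}{(k-1)!}(x - t)^{k-1}\right) \\ &= \frac{f^{(k+1)}(t)}{k!}(x - t)^k, \end{aligned}$$

plug it into (*) and rearrange terms to find that

$$R_k(x) = \frac{f^{(k+1)}(\xi)}{k!}(x - \xi)^k \, \frac{G(x) - G(a)}{G'(\xi)}.$$

This is the form of the remainder term mentioned after the actual statement of Taylor's theorem with remainder in the mean value form. The Lagrange form of the remainder is found by choosing $G(t) = (x - t)^{k+1}$ and the Cauchy form by choosing $G(t) = t - a$.

Remark. Using this method one can also recover the integral form of the remainder by choosing

$$G(t) = \int_a^t \frac{f^{(k+1)}(s)}{k!}(x - s)^k \, ds,$$

but the requirements for f needed for the use of the mean value theorem are too strong, if one aims to prove the claim in the case that $f^{(k)}$ is only absolutely continuous. However, if one uses the Riemann integral instead of the Lebesgue integral, the assumptions cannot be weakened.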

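Derivation for the integral form of the remainder

Due to absolute continuity of $f^{(k)}$ on the closed interval between a and x, its derivative $f^{(k+1)}$ exists as an $L^1$-function, and we can use the fundamental theorem of calculus and integration by parts. This same proof applies for the Riemann integral assuming that $f^{(k)}$ is continuous on the closed interval and differentiable on the open interval between a and x, and this leads to the same result as using the mean value theorem.

The fundamental theorem of calculus states that

$$f(x) = f(a) + \int_a^x f'(t) \, dt.$$

Now we can integrate by parts and use the fundamental theorem of calculus again to see that

$$\begin{aligned} f(x) &= f(a) + \big(x f'(x) - a f'(a)\big) - \int_a^x t f''(t) \, dt \\ &= f(a) + x \left(f'(a) + \int_a^x f''(t) \, dt\right) - a f'(a) - \int_a^x t f''(t) \, dt \\ &= f(a) + (x - a) f'(a) + \int_a^x (x - t) f''(t) \, dt, \end{aligned}$$

which is exactly Taylor's theorem with remainder in the integral form in the case k = 1. The general statement is proved using induction. Suppose that

$$f(x) = f(a) + \frac{f'(a)}{1!}(x - a) + \cdots + \frac{f^{(k)}(a)}{k!}(x - a)^k + \int_a^x \frac{f^{(k+1)}(t)}{k!}(x - t)^k \, dt. \qquad (*)$$

Integrating the remainder term by parts we arrive at

$$\begin{aligned} \int_a^x \frac{f^{(k+1)}(t)}{k!}(x - t)^k \, dt &= -\left[\frac{f^{(k+1)}(t)}{(k+1) \, k!}(x - t)^{k+1}\right]_a^x + \int_a^x \frac{f^{(k+2)}(t)}{(k+1) \, k!}(x - t)^{k+1} \, dt \\ &= \frac{f^{(k+1)}(a)}{(k+1)!}(x - a)^{k+1} + \int_a^x \frac{f^{(k+2)}(t)}{(k+1)!}(x - t)^{k+1} \, dt. \end{aligned}$$

Substituting this into the formula in (*) shows that if it holds for the value k, it must also hold for the value k + 1. Therefore, since it holds for k = 1, it must hold for every positive integer k.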

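Derivation for the Cauchy form of the remainder

To the integral form of the remainder we can apply the mean value theorem for integrals, which gives

$$R_k(x) = \int_a^x \frac{f^{(k+1)}(t)}{k!}(x - t)^k \, dt = \frac{f^{(k+1)}(\xi)}{k!}(x - \xi)^k (x - a),$$

where ξ is some number between a and x.

So the Cauchy form of the remainder holds.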

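Derivation for the remainder of multivariate Taylor polynomials

We prove the special case where f : $\mathbb{R}^n$ → R has continuous partial derivatives up to the order k + 1 in some closed ball B with center a. The strategy of the proof is to apply the one-variable case of Taylor's theorem to the restriction of f to the line segment joining x and a.[15] Parametrize the line segment between a and x by u(t) = a + t(x − a). We apply the one-variable version of Taylor's theorem to the function g(t) = f(u(t)):

$$f(x) = g(1) = g(0) + \sum_{j=1}^{k} \frac{1}{j!} g^{(j)}(0) + \int_0^1 \frac{(1 - t)^k}{k!} g^{(k+1)}(t) \, dt.$$

Applying the chain rule for several variables gives

$$g^{(j)}(t) = \frac{d^j}{dt^j} f\big(a + t(x - a)\big) = \sum_{|\alpha| = j} \binom{j}{\alpha} (D^{\alpha} f)\big(a + t(x - a)\big) (x - a)^{\alpha},$$

where $\binom{j}{\alpha}$ is the multinomial coefficient. Since $\frac{1}{j!} \binom{j}{\alpha} = \frac{1}{\alpha!}$, we get

$$f(x) = f(a) + \sum_{1 \le |\alpha| \le k} \frac{1}{\alpha!} (D^{\alpha} f)(a) (x - a)^{\alpha} + \sum_{|\alpha| = k+1} \frac{k + 1}{\alpha!} (x - a)^{\alpha} \int_0^1 (1 - t)^k (D^{\alpha} f)\big(a + t(x - a)\big) \, dt.$$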


Footnotes

- ^ (2013). 'Linear and quadratic approximation' Retrieved December 6, 2018

- ^ Kline 1972, pp. 442, 464.

- ^ Genocchi, Angelo; Peano, Giuseppe (1884), Calcolo differenziale e principii di calcolo integrale, (N. 67, pp. XVII–XIX): Fratelli Bocca ed.

- ^ Spivak, Michael (1994), Calculus (3rd ed.), Houston, TX: Publish or Perish, p. 383, ISBN 978-0-914098-89-8

- ^ Hazewinkel, Michiel, ed. (2001) [1994], 'Taylor formula', Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4

- ^ The hypothesis of $f^{(k)}$ being continuous on the closed interval between a and x is not redundant. Although f being k + 1 times differentiable on the open interval between a and x does imply that $f^{(k)}$ is continuous on the open interval between a and x, it does not imply that $f^{(k)}$ is continuous on the closed interval between a and x, i.e. it does not imply that $f^{(k)}$ is continuous at the endpoints of that interval. Consider, for example, the function f : [0, 1] → R defined to equal sin(1/x) on (0, 1] and with f(0) = 0. This is not continuous at 0, but is continuous on (0, 1). Moreover, one can show that this function has an antiderivative. Therefore that antiderivative is differentiable on (0, 1), its derivative (the function f) is continuous on the open interval (0, 1), but its derivative f is not continuous on the closed interval [0, 1]. So the theorem would not apply in this case.

- ^ Kline 1998, §20.3; Apostol 1967, §7.7.

- ^ Apostol 1967, §7.7.

- ^ Apostol 1967, §7.5.

- ^ Apostol 1967, §7.6.

- ^ Rudin 1987, §10.26.

- ^ This follows from iterated application of the theorem that if the partial derivatives of a function f exist in a neighborhood of a and are continuous at a, then the function is differentiable at a. See, for instance, Apostol 1974, Theorem 12.11.

- ^ Königsberger Analysis 2, p. 64 ff.

- ^ Stromberg 1981.

- ^ Hörmander 1976, pp. 12–13.

References

- Apostol, Tom (1967), Calculus, Wiley, ISBN 0-471-00005-1.

- Apostol, Tom (1974), Mathematical analysis, Addison–Wesley.

- Bartle, Robert G.; Sherbert, Donald R. (2011), Introduction to Real Analysis (4th ed.), Wiley, ISBN 978-0-471-43331-6.

- Hörmander, L. (1976), Linear Partial Differential Operators, Volume 1, Springer, ISBN 978-3-540-00662-6.

- Kline, Morris (1972), Mathematical thought from ancient to modern times, Volume 2, Oxford University Press.

- Kline, Morris (1998), Calculus: An Intuitive and Physical Approach, Dover, ISBN 0-486-40453-6.

- Pedrick, George (1994), A First Course in Analysis, Springer, ISBN 0-387-94108-8.

- Stromberg, Karl (1981), Introduction to classical real analysis, Wadsworth, ISBN 978-0-534-98012-2.

- Rudin, Walter (1987), Real and complex analysis (3rd ed.), McGraw-Hill, ISBN 0-07-054234-1.

- Tao, Terence (2014), Analysis, Volume I (3rd ed.), Hindustan Book Agency, ISBN 978-93-80250-64-9.

External links

- Taylor's theorem at ProofWiki

- Taylor Series Approximation to Cosine at cut-the-knot

- Trigonometric Taylor Expansion interactive demonstrative applet

- Taylor Series Revisited at Holistic Numerical Methods Institute


As the degree of the Taylor polynomial rises, it approaches the correct function. This image shows sin x and its Taylor approximations, polynomials of degree 1, 3, 5, 7, 9, 11 and 13.


In mathematics, a Taylor series is a representation of a function as an infinite sum of terms that are calculated from the values of the function's derivatives at a single point.[1][2][3]

In the West, the subject was formulated by the Scottish mathematician James Gregory and formally introduced by the English mathematician Brook Taylor in 1715. If the Taylor series is centered at zero, then that series is also called a Maclaurin series, after the Scottish mathematician Colin Maclaurin, who made extensive use of this special case of Taylor series in the 18th century.

A function can be approximated by using a finite number of terms of its Taylor series. Taylor's theorem gives quantitative estimates on the error introduced by the use of such an approximation. The polynomial formed by taking some initial terms of the Taylor series is called a Taylor polynomial. The Taylor series of a function is the limit of that function's Taylor polynomials as the degree increases, provided that the limit exists. A function may not be equal to its Taylor series, even if its Taylor series converges at every point. A function that is equal to its Taylor series in an open interval (or a disc in the complex plane) is known as an analytic function in that interval.


Definition

The Taylor series of a real or complex-valued function f(x) that is infinitely differentiable at a real or complex number a is the power series

$$f(a) + \frac{f'(a)}{1!}(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \frac{f'''(a)}{3!}(x - a)^3 + \cdots,$$

where n! denotes the factorial of n and $f^{(n)}(a)$ denotes the nth derivative of f evaluated at the point a. In the more compact sigma notation, this can be written as

$$\sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!}(x - a)^n.$$

The derivative of order zero of f is defined to be f itself and $(x - a)^0$ and 0! are both defined to be 1. When a = 0, the series is also called a Maclaurin series.[4]
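The definition can be turned into a small routine that sums the series from a list of derivative values at a. The sketch below is one possible illustration; the function names are hypothetical, and the sine function is used as the test case because its derivatives at 0 follow a simple cycle.

```python
import math

def taylor_partial_sum(derivs_at_a, a, x):
    """Sum of f^(n)(a) / n! * (x - a)^n over the supplied derivative values."""
    return sum(d / math.factorial(n) * (x - a) ** n
               for n, d in enumerate(derivs_at_a))

def sin_derivatives_at_zero(count):
    # Derivatives of sin at 0 cycle through 0, 1, 0, -1.
    cycle = [0.0, 1.0, 0.0, -1.0]
    return [cycle[n % 4] for n in range(count)]

# Terms up to degree 13 of the Maclaurin series of sin, evaluated at x = 1.
approx = taylor_partial_sum(sin_derivatives_at_zero(14), 0.0, 1.0)
print(approx, math.sin(1.0))
```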

Examples[edit]

The Taylor series for any polynomial is the polynomial itself.

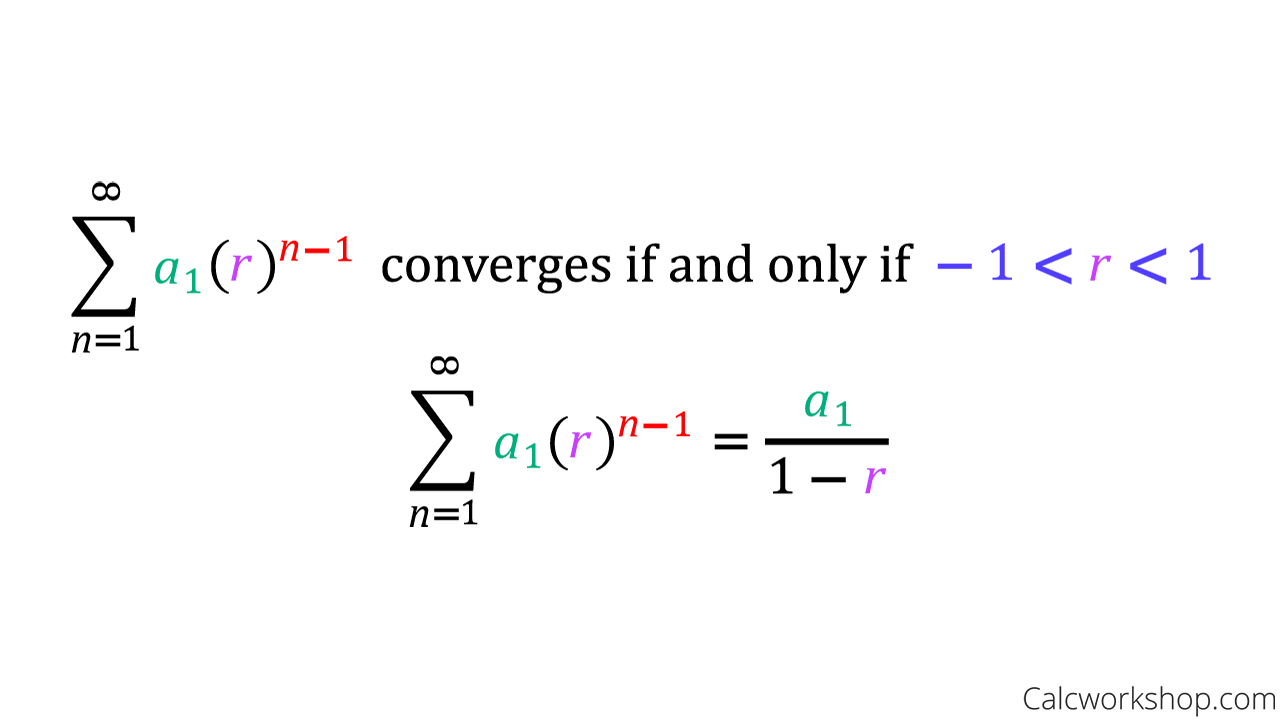

The Maclaurin series for 1/1 − x is the geometric series

so the Taylor series for 1/x at a = 1 is

By integrating the above Maclaurin series, we find the Maclaurin series for log(1 − x), where log denotes the natural logarithm:

and the corresponding Taylor series for log x at a = 1 is

and more generally, the corresponding Taylor series for log x at some a = x0 is:

The Taylor series for the exponential functionex at a = 0 is

The above expansion holds because the derivative of ex with respect to x is also ex and e0 equals 1. This leaves the terms (x − 0)n in the numerator and n! in the denominator for each term in the infinite sum.
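The logarithm series above can be checked numerically. The sketch below sums the series for log x around a base point $x_0$, using the general term $(-1)^{n+1} (x - x_0)^n / (n \, x_0^n)$ obtained from $\log x = \log x_0 + \log(1 + (x - x_0)/x_0)$; the function name and the default number of terms are illustrative choices.

```python
import math

def log_series(x, x0=1.0, n_terms=60):
    """Partial sum of log(x0) + sum over n >= 1 of (-1)^(n+1) (x - x0)^n / (n x0^n)."""
    total = math.log(x0)
    for n in range(1, n_terms + 1):
        total += (-1) ** (n + 1) * (x - x0) ** n / (n * x0**n)
    return total

print(log_series(1.5), math.log(1.5))
print(log_series(2.5, x0=2.0), math.log(2.5))
```

The series only converges for $|x - x_0| < x_0$ (and at $x = 2 x_0$), so the sketch should not be used far from the base point.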

History

The Greek philosopher Zeno considered the problem of summing an infinite series to achieve a finite result, but rejected it as an impossibility;[5] the result was Zeno's paradox. Later, Aristotle proposed a philosophical resolution of the paradox, but the mathematical content was apparently unresolved until taken up by Archimedes, as it had been prior to Aristotle by the Presocratic Atomist Democritus. It was through Archimedes's method of exhaustion that an infinite number of progressive subdivisions could be performed to achieve a finite result.[6] Liu Hui independently employed a similar method a few centuries later.[7]

In the 14th century, the earliest examples of the use of Taylor series and closely related methods were given by Madhava of Sangamagrama.[1][2] Though no record of his work survives, writings of later Indian mathematicians suggest that he found a number of special cases of the Taylor series, including those for the trigonometric functions of sine, cosine, tangent, and arctangent. The Kerala School of Astronomy and Mathematics further expanded his works with various series expansions and rational approximations until the 16th century.

In the 17th century, James Gregory also worked in this area and published several Maclaurin series. It was not until 1715 however that a general method for constructing these series for all functions for which they exist was finally provided by Brook Taylor,[8] after whom the series are now named.

The Maclaurin series was named after Colin Maclaurin, a professor in Edinburgh, who published the special case of the Taylor result in the 18th century.

Analytic functions[edit]

The function e(−1/x2) is not analytic at x = 0: the Taylor series is identically 0, although the function is not.

If f (x) is given by a convergent power series in an open disc (or interval in the real line) centred at b in the complex plane, it is said to be analytic in this disc. Thus for x in this disc, f is given by a convergent power series

Differentiating by x the above formula n times, then setting x = b gives:

and so the power series expansion agrees with the Taylor series. Thus a function is analytic in an open disc centred at b if and only if its Taylor series converges to the value of the function at each point of the disc.

If f(x) is equal to its Taylor series for all x in the complex plane, it is called entire. The polynomials, the exponential function e^x, and the trigonometric functions sine and cosine are examples of entire functions. Examples of functions that are not entire include the square root, the logarithm, the trigonometric function tangent, and its inverse, arctan. For these functions the Taylor series do not converge if x is far from b. That is, the Taylor series diverges at x if the distance between x and b is larger than the radius of convergence. The Taylor series can be used to calculate the value of an entire function at every point, if the value of the function, and of all of its derivatives, are known at a single point.

Uses of the Taylor series for analytic functions include:

- The partial sums (the Taylor polynomials) of the series can be used as approximations of the function. These approximations are good if sufficiently many terms are included.

- Differentiation and integration of power series can be performed term by term and is hence particularly easy.

- An analytic function is uniquely extended to a holomorphic function on an open disk in the complex plane. This makes the machinery of complex analysis available.

- The (truncated) series can be used to compute function values numerically (often by recasting the polynomial into the Chebyshev form and evaluating it with the Clenshaw algorithm).

- Algebraic operations can be done readily on the power series representation; for instance, Euler's formula follows from Taylor series expansions for trigonometric and exponential functions. This result is of fundamental importance in such fields as harmonic analysis.

- Approximations using the first few terms of a Taylor series can make otherwise unsolvable problems possible for a restricted domain; this approach is often used in physics.

Approximation error and convergence

The sine function (blue) is closely approximated by its Taylor polynomial of degree 7 (pink) for a full period centered at the origin.

The Taylor polynomials for log(1 + x) only provide accurate approximations in the range −1 < x ≤ 1. For x > 1, Taylor polynomials of higher degree provide worse approximations.

The Taylor approximations for log(1 + x) (black). For x > 1, the approximations diverge.

Pictured on the right is an accurate approximation of sin x around the point x = 0. The pink curve is a polynomial of degree seven:

$$\sin x \approx x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!}.$$

The error in this approximation is no more than |x|^9/9!. In particular, for −1 < x < 1, the error is less than 0.000003.
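The stated bound can be checked numerically. The following is a minimal sketch, not part of the original article: it samples the interval [−1, 1] and compares the actual error of the degree-seven polynomial with |x|^9/9!. The function names `sin_taylor7` and `remainder_bound` are illustrative choices.

```python
import math

def sin_taylor7(x: float) -> float:
    """Degree-7 Maclaurin polynomial of sin: x - x^3/3! + x^5/5! - x^7/7!."""
    return x - x**3 / 6 + x**5 / 120 - x**7 / 5040

def remainder_bound(x: float) -> float:
    """The bound |x|^9 / 9! on the error of the degree-7 polynomial."""
    return abs(x) ** 9 / math.factorial(9)

# Sample [-1, 1] and compare the largest observed error with the bound.
xs = [i / 100 for i in range(-100, 101)]
worst_error = max(abs(math.sin(x) - sin_taylor7(x)) for x in xs)
worst_bound = max(remainder_bound(x) for x in xs)

print(f"largest sampled error: {worst_error:.3e}")
print(f"bound at |x| = 1:      {worst_bound:.3e}")
```

The sampled error stays below the bound, and the bound itself is below 0.000003 on this interval.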

In contrast, also shown is a picture of the natural logarithm function log(1 + x) and some of its Taylor polynomials around a = 0. These approximations converge to the function only in the region −1 < x ≤ 1; outside of this region the higher-degree Taylor polynomials are worse approximations for the function. This is similar to Runge's phenomenon.[citation needed]
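The contrast between the two regions can be illustrated with a short experiment. This sketch, which is an illustration and not taken from the article, evaluates the Maclaurin polynomials of log(1 + x) at a point inside the interval of convergence, at the endpoint x = 1, and at a point outside it. The helper name `log1p_taylor` is hypothetical.

```python
import math

def log1p_taylor(x: float, degree: int) -> float:
    """Maclaurin polynomial of log(1 + x): sum of (-1)^(n+1) x^n / n for n = 1..degree."""
    return sum((-1) ** (n + 1) * x**n / n for n in range(1, degree + 1))

for x in (0.5, 1.0, 1.5):
    exact = math.log1p(x)
    errors = [abs(log1p_taylor(x, d) - exact) for d in (4, 8, 16)]
    print(f"x = {x}: errors for degrees 4, 8, 16 -> "
          + ", ".join(f"{e:.2e}" for e in errors))
```

For x = 0.5 and x = 1 the errors shrink as the degree grows (slowly at the endpoint), while for x = 1.5 they grow.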

The error incurred in approximating a function by its nth-degree Taylor polynomial is called the remainder or residual and is denoted by the function R_n(x). Taylor's theorem can be used to obtain a bound on the size of the remainder.

In general, Taylor series need not be convergent at all. In fact, the set of functions with a convergent Taylor series is a meager set in the Fréchet space of smooth functions. Even if the Taylor series of a function f does converge, its limit need not in general be equal to the value of the function f(x). For example, the function

$$f(x) = \begin{cases} e^{-1/x^2} & \text{if } x \neq 0, \\ 0 & \text{if } x = 0 \end{cases}$$

is infinitely differentiable at x = 0, and has all derivatives zero there. Consequently, the Taylor series of f(x) about x = 0 is identically zero. However, f(x) is not the zero function, so it does not equal its Taylor series around the origin. Thus, f(x) is an example of a non-analytic smooth function.

In real analysis, this example shows that there are infinitely differentiable functions f(x) whose Taylor series are not equal to f(x) even if they converge. By contrast, the holomorphic functions studied in complex analysis always possess a convergent Taylor series, and even the Taylor series of meromorphic functions, which might have singularities, never converge to a value different from the function itself. The complex function e^(−1/z^2), however, does not approach 0 when z approaches 0 along the imaginary axis, so it is not continuous in the complex plane and its Taylor series is undefined at 0.

More generally, every sequence of real or complex numbers can appear as coefficients in the Taylor series of an infinitely differentiable function defined on the real line, a consequence of Borel's lemma. As a result, the radius of convergence of a Taylor series can be zero. There are even infinitely differentiable functions defined on the real line whose Taylor series have a radius of convergence 0 everywhere.[9]

A function cannot be written as a Taylor series centred at a singularity; in these cases, one can often still achieve a series expansion if one also allows negative powers of the variable x; see Laurent series. For example, f(x) = e^(−1/x^2) can be written as a Laurent series.

Generalization

There is, however, a generalization[10][11] of the Taylor series that does converge to the value of the function itself for any bounded continuous function on (0,∞), using the calculus of finite differences. Specifically, one has the following theorem, due to Einar Hille, that for any t > 0,

$$\lim_{h\to 0^+} \sum_{n=0}^{\infty} \frac{t^n}{n!} \frac{\Delta_h^n f(a)}{h^n} = f(a+t).$$

Here Δ_h^n is the nth finite difference operator with step size h. The series is precisely the Taylor series, except that divided differences appear in place of differentiation: the series is formally similar to the Newton series. When the function f is analytic at a, the terms in the series converge to the terms of the Taylor series, and in this sense the series generalizes the usual Taylor series.

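In general, for any infinite sequence a_i, the following power series identity holds:

$$\sum_{n=0}^{\infty} \frac{u^n}{n!} \Delta^n a_i = e^{-u} \sum_{j=0}^{\infty} \frac{u^j}{j!} a_{i+j}.$$

So in particular,

$$f(a+t) = \lim_{h\to 0^+} e^{-t/h} \sum_{j=0}^{\infty} f(a+jh) \frac{(t/h)^j}{j!}.$$

The series on the right is the expectation value of f(a + X), where X is a Poisson-distributed random variable that takes the value jh with probability e^(−t/h)·(t/h)^j/j!. Hence, writing P_{t/h,h} for the distribution of X,

$$f(a+t) = \lim_{h\to 0^+} \int_{-\infty}^{\infty} f(a+x)\, dP_{t/h,h}(x).$$

The law of large numbers implies that the identity holds.[12]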
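The Poisson interpretation lends itself to a numerical experiment. The sketch below is an illustration under stated assumptions, not a proof: it evaluates the Poisson-weighted average of f(a + jh), with weights e^(−t/h)·(t/h)^j/j!, for the bounded continuous function cos and a decreasing sequence of step sizes h. The function name `poisson_average`, the cutoff `j_max`, and the sample values of a and t are choices made for this example.

```python
import math

def poisson_average(f, a: float, t: float, h: float) -> float:
    """Evaluate e^(-t/h) * sum_j f(a + j*h) * (t/h)^j / j!, truncated numerically."""
    lam = t / h
    # Poisson weights are negligible far above the mean; this cutoff is a heuristic.
    j_max = int(lam + 12 * math.sqrt(lam) + 20)
    total = 0.0
    for j in range(j_max + 1):
        # Work with logarithms so that large powers and factorials do not overflow.
        log_weight = -lam + j * math.log(lam) - math.lgamma(j + 1)
        total += math.exp(log_weight) * f(a + j * h)
    return total

a, t = 0.5, 1.0
for h in (0.1, 0.01, 0.001):
    approx = poisson_average(math.cos, a, t, h)
    print(f"h = {h}: {approx:.6f}  (cos(a + t) = {math.cos(a + t):.6f})")
```

As h decreases, the weighted average approaches cos(a + t), consistent with the limit stated in the theorem.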

List of Maclaurin series of some common functions

Several important Maclaurin series expansions follow.[13] All these expansions are valid for complex arguments x.

Exponential function

The exponential function e^x (in blue), and the sum of the first n + 1 terms of its Taylor series at 0 (in red).

The exponential function (with base e) has Maclaurin series

$$e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots.$$

It converges for all x.

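Natural logarithm

The natural logarithm (with base e) has Maclaurin series

$$\log(1-x) = -\sum_{n=1}^{\infty} \frac{x^n}{n} = -x - \frac{x^2}{2} - \frac{x^3}{3} - \cdots,$$

$$\log(1+x) = \sum_{n=1}^{\infty} (-1)^{n+1} \frac{x^n}{n} = x - \frac{x^2}{2} + \frac{x^3}{3} - \cdots.$$

They converge for |x| < 1. In addition, the series for log(1 − x) converges for x = −1, and the series for log(1 + x) converges for x = 1.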

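Geometric series

The geometric series and its derivatives have Maclaurin series

$$\frac{1}{1-x} = \sum_{n=0}^{\infty} x^n, \qquad \frac{1}{(1-x)^2} = \sum_{n=1}^{\infty} n x^{n-1}, \qquad \frac{1}{(1-x)^3} = \sum_{n=2}^{\infty} \frac{(n-1)n}{2} x^{n-2}.$$

All are convergent for |x| < 1. These are special cases of the binomial series given in the next section.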

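Binomial series

The binomial series is the power series

$$(1+x)^{\alpha} = \sum_{n=0}^{\infty} \binom{\alpha}{n} x^n$$

whose coefficients are the generalized binomial coefficients

$$\binom{\alpha}{n} = \prod_{k=1}^{n} \frac{\alpha-k+1}{k} = \frac{\alpha(\alpha-1)\cdots(\alpha-n+1)}{n!}.$$

(If n = 0, this product is an empty product and has value 1.) It converges for |x| < 1 for any real or complex number α.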

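When α = −1, this is essentially the infinite geometric series mentioned in the previous section. The special cases α = 1/2 and α = −1/2 give the square root function and its reciprocal:

$$(1+x)^{1/2} = 1 + \frac{1}{2}x - \frac{1}{8}x^2 + \frac{1}{16}x^3 - \frac{5}{128}x^4 + \frac{7}{256}x^5 - \cdots,$$

$$(1+x)^{-1/2} = 1 - \frac{1}{2}x + \frac{3}{8}x^2 - \frac{5}{16}x^3 + \frac{35}{128}x^4 - \frac{63}{256}x^5 + \cdots.$$

When only the linear term is retained, this simplifies to the binomial approximation.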
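The generalized binomial coefficients are easy to compute exactly. The following sketch uses Python's `fractions` module to evaluate the product of (α − k + 1)/k for k = 1, …, n and lists the first coefficients for α = 1/2 and α = −1/2. The function name `binom` is an illustrative choice, not notation from the article.

```python
from fractions import Fraction
import math

def binom(alpha: Fraction, n: int) -> Fraction:
    """Generalized binomial coefficient: product over k = 1..n of (alpha - k + 1) / k."""
    result = Fraction(1)  # empty product when n = 0
    for k in range(1, n + 1):
        result *= (alpha - k + 1) / k
    return result

sqrt_coeffs = [binom(Fraction(1, 2), n) for n in range(6)]
inv_sqrt_coeffs = [binom(Fraction(-1, 2), n) for n in range(6)]
print("alpha =  1/2:", [str(c) for c in sqrt_coeffs])
print("alpha = -1/2:", [str(c) for c in inv_sqrt_coeffs])

# Compare a six-term partial sum with the square root at a point inside |x| < 1.
x = 0.2
partial = sum(float(c) * x**n for n, c in enumerate(sqrt_coeffs))
print(f"partial sum at x = {x}: {partial:.8f}, sqrt(1 + x) = {math.sqrt(1 + x):.8f}")
```

With α = −1 the same function returns the alternating coefficients of the geometric series for 1/(1 + x).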

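Trigonometric functions

The usual trigonometric functions and their inverses have the following Maclaurin series:

$$\begin{aligned}
\sin x &= \sum_{n=0}^{\infty} \frac{(-1)^n}{(2n+1)!} x^{2n+1} = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \cdots && \text{for all } x \\
\cos x &= \sum_{n=0}^{\infty} \frac{(-1)^n}{(2n)!} x^{2n} = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \cdots && \text{for all } x \\
\tan x &= \sum_{n=1}^{\infty} \frac{B_{2n} (-4)^n (1-4^n)}{(2n)!} x^{2n-1} = x + \frac{x^3}{3} + \frac{2x^5}{15} + \cdots && \text{for } |x| < \frac{\pi}{2} \\
\sec x &= \sum_{n=0}^{\infty} \frac{(-1)^n E_{2n}}{(2n)!} x^{2n} = 1 + \frac{x^2}{2} + \frac{5x^4}{24} + \cdots && \text{for } |x| < \frac{\pi}{2} \\
\arcsin x &= \sum_{n=0}^{\infty} \frac{(2n)!}{4^n (n!)^2 (2n+1)} x^{2n+1} = x + \frac{x^3}{6} + \frac{3x^5}{40} + \cdots && \text{for } |x| \le 1 \\
\arccos x &= \frac{\pi}{2} - \arcsin x && \text{for } |x| \le 1 \\
\arctan x &= \sum_{n=0}^{\infty} \frac{(-1)^n}{2n+1} x^{2n+1} = x - \frac{x^3}{3} + \frac{x^5}{5} - \cdots && \text{for } |x| \le 1,\ x \ne \pm i
\end{aligned}$$

All angles are expressed in radians. The numbers B_k appearing in the expansions of tan x are the Bernoulli numbers. The E_k in the expansion of sec x are Euler numbers.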

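Hyperbolic functions

The hyperbolic functions have Maclaurin series closely related to the series for the corresponding trigonometric functions:

$$\begin{aligned}
\sinh x &= \sum_{n=0}^{\infty} \frac{x^{2n+1}}{(2n+1)!} = x + \frac{x^3}{3!} + \frac{x^5}{5!} + \cdots && \text{for all } x \\
\cosh x &= \sum_{n=0}^{\infty} \frac{x^{2n}}{(2n)!} = 1 + \frac{x^2}{2!} + \frac{x^4}{4!} + \cdots && \text{for all } x \\
\tanh x &= \sum_{n=1}^{\infty} \frac{B_{2n} 4^n (4^n-1)}{(2n)!} x^{2n-1} = x - \frac{x^3}{3} + \frac{2x^5}{15} - \cdots && \text{for } |x| < \frac{\pi}{2} \\
\operatorname{arsinh} x &= \sum_{n=0}^{\infty} \frac{(-1)^n (2n)!}{4^n (n!)^2 (2n+1)} x^{2n+1} && \text{for } |x| \le 1 \\
\operatorname{artanh} x &= \sum_{n=0}^{\infty} \frac{x^{2n+1}}{2n+1} && \text{for } |x| < 1
\end{aligned}$$

The numbers B_k appearing in the series for tanh x are the Bernoulli numbers.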

Calculation of Taylor series

Several methods exist for the calculation of Taylor series of a large number of functions. One can attempt to use the definition of the Taylor series, though this often requires generalizing the form of the coefficients according to a readily apparent pattern. Alternatively, one can use manipulations such as substitution, multiplication or division, addition or subtraction of standard Taylor series to construct the Taylor series of a function, by virtue of Taylor series being power series. In some cases, one can also derive the Taylor series by repeatedly applying integration by parts. Particularly convenient is the use of computer algebra systems to calculate Taylor series.

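First example

In order to compute the 7th degree Maclaurin polynomial for the function

$$f(x) = \log(\cos x), \quad x \in \left(-\tfrac{\pi}{2}, \tfrac{\pi}{2}\right),$$

one may first rewrite the function as

$$f(x) = \log\bigl(1 + (\cos x - 1)\bigr).$$

The Taylor series for the natural logarithm is (using the big O notation)

$$\log(1+x) = x - \frac{x^2}{2} + \frac{x^3}{3} + O(x^4)$$

and for the cosine function

$$\cos x - 1 = -\frac{x^2}{2} + \frac{x^4}{24} - \frac{x^6}{720} + O(x^8).$$

The latter series expansion has a zero constant term, which enables us to substitute the second series into the first one and to easily omit terms of higher order than the 7th degree by using the big O notation:

$$\begin{aligned}
f(x) &= (\cos x - 1) - \frac{1}{2}(\cos x - 1)^2 + \frac{1}{3}(\cos x - 1)^3 + O\bigl((\cos x - 1)^4\bigr) \\
&= \left(-\frac{x^2}{2} + \frac{x^4}{24} - \frac{x^6}{720}\right) - \frac{1}{2}\left(\frac{x^4}{4} - \frac{x^6}{24}\right) + \frac{1}{3}\left(-\frac{x^6}{8}\right) + O(x^8) \\
&= -\frac{x^2}{2} - \frac{x^4}{12} - \frac{x^6}{45} + O(x^8).
\end{aligned}$$

Since the cosine is an even function, the coefficients for all the odd powers x, x^3, x^5, x^7, ... have to be zero.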
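The substitution can also be carried out mechanically with exact rational arithmetic. The sketch below assumes the function in this example is log(cos x), as the description (a logarithm composed with the cosine, giving an even result) indicates. It represents each series as a list of coefficients truncated at degree 7; the names `N`, `mul`, `u` and `log_cos` are illustrative.

```python
from fractions import Fraction
from math import factorial

N = 8  # keep the coefficients of x^0 .. x^7

def mul(p, q):
    """Multiply two truncated power series given as coefficient lists of length N."""
    out = [Fraction(0)] * N
    for i, a in enumerate(p):
        if a == 0:
            continue
        for j, b in enumerate(q):
            if i + j < N:
                out[i + j] += a * b
    return out

# u(x) = cos x - 1 = -x^2/2! + x^4/4! - x^6/6! + O(x^8)
u = [Fraction(0)] * N
for k in range(2, N, 2):
    u[k] = Fraction((-1) ** (k // 2), factorial(k))

# log(1 + u) = u - u^2/2 + u^3/3 - ...; u starts at x^2, so u^4 is already O(x^8).
log_cos = [Fraction(0)] * N
power = [Fraction(1)] + [Fraction(0)] * (N - 1)  # u^0
for m in range(1, 4):
    power = mul(power, u)
    sign = 1 if m % 2 == 1 else -1
    for k in range(N):
        log_cos[k] += Fraction(sign, m) * power[k]

for k, c in enumerate(log_cos):
    print(f"x^{k}: {c}")
```

Only three powers of u are needed because each factor of u contributes at least x^2; this is the same observation that lets the big O notation absorb the higher-order terms.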

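Second example

Suppose we want the Taylor series at 0 of the function

$$g(x) = \frac{e^x}{\cos x}.$$

We have for the exponential function

$$e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \cdots$$

and, as in the first example,

$$\cos x = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \cdots.$$

Assume the power series is

$$\frac{e^x}{\cos x} = c_0 + c_1 x + c_2 x^2 + c_3 x^3 + c_4 x^4 + \cdots.$$

Then multiplication with the denominator and substitution of the series of the cosine yields

$$e^x = \left(c_0 + c_1 x + c_2 x^2 + c_3 x^3 + c_4 x^4 + \cdots\right)\left(1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \cdots\right).$$

Collecting the terms up to fourth order yields

$$e^x = c_0 + c_1 x + \left(c_2 - \frac{c_0}{2}\right)x^2 + \left(c_3 - \frac{c_1}{2}\right)x^3 + \left(c_4 - \frac{c_2}{2} + \frac{c_0}{4!}\right)x^4 + \cdots.$$

The values of c_i can be found by comparison of coefficients with the top expression for e^x, yielding:

$$\frac{e^x}{\cos x} = 1 + x + x^2 + \frac{2x^3}{3} + \frac{x^4}{2} + \cdots.$$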
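Comparison of coefficients amounts to solving a triangular system order by order, which is simple to automate. The sketch below assumes the function in this example is e^x / cos x, the quotient of the two series named in the text, and solves for the unknown coefficients with exact fractions. The names `ORDER`, `divide` and `coeffs` are illustrative.

```python
from fractions import Fraction
from math import factorial

ORDER = 5  # coefficients of x^0 .. x^4

exp_series = [Fraction(1, factorial(n)) for n in range(ORDER)]
cos_series = [Fraction((-1) ** (n // 2), factorial(n)) if n % 2 == 0 else Fraction(0)
              for n in range(ORDER)]

def divide(num, den):
    """Solve num = den * c for the coefficients c, order by order (requires den[0] != 0)."""
    c = []
    for n in range(len(num)):
        # Contribution to x^n of the coefficients that are already known.
        known = sum(den[k] * c[n - k] for k in range(1, n + 1))
        c.append((num[n] - known) / den[0])
    return c

coeffs = divide(exp_series, cos_series)
print([str(c) for c in coeffs])
```

Each step uses only coefficients found in earlier steps, which is why the unknowns can be determined one at a time.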

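Third example

Here we employ a method called 'indirect expansion' to expand the given function. This method uses the known Taylor expansion of the exponential function. In order to expand (1 + x)e^x as a Taylor series in x, we use the known Taylor series of the function e^x:

$$e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \cdots.$$

Thus,

$$\begin{aligned}
(1+x)e^x &= e^x + x e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!} + \sum_{n=0}^{\infty} \frac{x^{n+1}}{n!} = 1 + \sum_{n=1}^{\infty} \left(\frac{1}{n!} + \frac{1}{(n-1)!}\right) x^n \\
&= 1 + \sum_{n=1}^{\infty} \frac{n+1}{n!} x^n = \sum_{n=0}^{\infty} \frac{n+1}{n!} x^n.
\end{aligned}$$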

Taylor series as definitions

Classically, algebraic functions are defined by an algebraic equation, and transcendental functions (including those discussed above) are defined by some property that holds for them, such as a differential equation. For example, the exponential function is the function which is equal to its own derivative everywhere, and assumes the value 1 at the origin. However, one may equally well define an analytic function by its Taylor series.

Taylor series are used to define functions and 'operators' in diverse areas of mathematics. In particular, this is true in areas where the classical definitions of functions break down. For example, using Taylor series, one may extend analytic functions to sets of matrices and operators, such as the matrix exponential or matrix logarithm.

In other areas, such as formal analysis, it is more convenient to work directly with the power series themselves. Thus one may define a solution of a differential equation as a power series which, one hopes to prove, is the Taylor series of the desired solution.

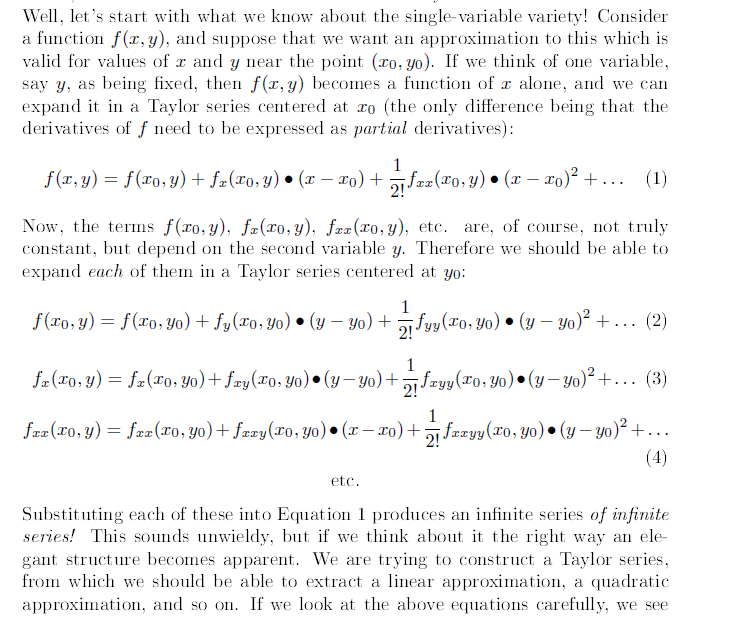

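Taylor series in several variables

The Taylor series may also be generalized to functions of more than one variable with[14][15]

$$T(x_1,\ldots,x_d) = \sum_{n_1=0}^{\infty} \cdots \sum_{n_d=0}^{\infty} \frac{(x_1-a_1)^{n_1} \cdots (x_d-a_d)^{n_d}}{n_1! \cdots n_d!} \left(\frac{\partial^{n_1+\cdots+n_d} f}{\partial x_1^{n_1} \cdots \partial x_d^{n_d}}\right)(a_1,\ldots,a_d).$$

For example, for a function f(x, y) that depends on two variables, x and y, the Taylor series to second order about the point (a, b) is

$$f(a,b) + (x-a) f_x(a,b) + (y-b) f_y(a,b) + \frac{1}{2!}\Bigl((x-a)^2 f_{xx}(a,b) + 2(x-a)(y-b) f_{xy}(a,b) + (y-b)^2 f_{yy}(a,b)\Bigr),$$

where the subscripts denote the respective partial derivatives.

A second-order Taylor series expansion of a scalar-valued function of more than one variable can be written compactly as

$$T(\mathbf{x}) = f(\mathbf{a}) + (\mathbf{x}-\mathbf{a})^{\mathsf{T}} Df(\mathbf{a}) + \frac{1}{2!} (\mathbf{x}-\mathbf{a})^{\mathsf{T}} \left\{D^2 f(\mathbf{a})\right\} (\mathbf{x}-\mathbf{a}) + \cdots,$$

where Df(a) is the gradient of f evaluated at x = a and D^2 f(a) is the Hessian matrix. Applying the multi-index notation, the Taylor series for several variables becomes

$$T(\mathbf{x}) = \sum_{|\alpha| \ge 0} \frac{(\mathbf{x}-\mathbf{a})^{\alpha}}{\alpha!} \left(\partial^{\alpha} f\right)(\mathbf{a}),$$

which is to be understood as a still more abbreviated multi-index version of the first equation of this paragraph, with a full analogy to the single variable case.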

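Example

Second-order Taylor series approximation (in orange) of a function f(x, y) = e^x log(1 + y) around the origin.

In order to compute a second-order Taylor series expansion around the point (a, b) = (0, 0) of the function

$$f(x,y) = e^x \log(1+y),$$

one first computes all the necessary partial derivatives:

$$\begin{aligned}
f_x &= e^x \log(1+y), & f_y &= \frac{e^x}{1+y}, \\
f_{xx} &= e^x \log(1+y), & f_{yy} &= -\frac{e^x}{(1+y)^2}, \\
f_{xy} &= f_{yx} = \frac{e^x}{1+y}.
\end{aligned}$$

Evaluating these derivatives at the origin gives the Taylor coefficients

$$f_x(0,0) = 0, \quad f_y(0,0) = 1, \quad f_{xx}(0,0) = 0, \quad f_{yy}(0,0) = -1, \quad f_{xy}(0,0) = f_{yx}(0,0) = 1.$$

Substituting these values into the general formula

$$T(x,y) = f(a,b) + (x-a) f_x(a,b) + (y-b) f_y(a,b) + \frac{1}{2!}\Bigl((x-a)^2 f_{xx}(a,b) + 2(x-a)(y-b) f_{xy}(a,b) + (y-b)^2 f_{yy}(a,b)\Bigr) + \cdots$$

produces

$$T(x,y) = 0 + 0\,(x-0) + 1\,(y-0) + \frac{1}{2}\Bigl(0\,(x-0)^2 + 2(x-0)(y-0) + (-1)(y-0)^2\Bigr) + \cdots = y + xy - \frac{y^2}{2} + \cdots.$$

Since log(1 + y) is analytic in |y| < 1, we have

$$e^x \log(1+y) = y + xy - \frac{y^2}{2} + \cdots, \qquad |y| < 1.$$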
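A quick numerical check shows the expected behaviour of a second-order approximation: the error shrinks like the cube of the distance from the origin. This sketch assumes the second-order polynomial for f(x, y) = e^x log(1 + y) is y + xy − y^2/2, which is what the partial derivatives at the origin give. The names `taylor2` and `error` are illustrative.

```python
import math

def f(x: float, y: float) -> float:
    return math.exp(x) * math.log1p(y)

def taylor2(x: float, y: float) -> float:
    """Second-order Taylor polynomial of f about (0, 0): y + x*y - y^2/2."""
    return y + x * y - y**2 / 2

def error(h: float) -> float:
    """Absolute error of the approximation at the point (h, h)."""
    return abs(f(h, h) - taylor2(h, h))

for h in (0.2, 0.1, 0.05):
    print(f"h = {h}: error = {error(h):.3e}")
print(f"error(0.1) / error(0.05) = {error(0.1) / error(0.05):.2f}")
```

Halving h reduces the error by a factor of about eight, as expected when the first neglected terms are of third order.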

Comparison with Fourier series

The trigonometric Fourier series enables one to express a periodic function (or a function defined on a closed interval [a,b]) as an infinite sum of trigonometric functions (sines and cosines). In this sense, the Fourier series is analogous to Taylor series, since the latter allows one to express a function as an infinite sum of powers. Nevertheless, the two series differ from each other in several relevant issues:

- The finite truncations of the Taylor series of f (x) about the point x = a are all exactly equal to f at a. In contrast, the Fourier series is computed by integrating over an entire interval, so there is generally no such point where all the finite truncations of the series are exact.

- The computation of Taylor series requires the knowledge of the function on an arbitrarily small neighbourhood of a point, whereas the computation of the Fourier series requires knowing the function on its whole domain interval. In a certain sense one could say that the Taylor series is 'local' and the Fourier series is 'global'.

- The Taylor series is defined for a function which has infinitely many derivatives at a single point, whereas the Fourier series is defined for any integrable function. In particular, the function could be nowhere differentiable. (For example, f (x) could be a Weierstrass function.)

- The convergence of the two series has very different properties. Even if the Taylor series has positive convergence radius, the resulting series may not coincide with the function; but if the function is analytic then the series converges pointwise to the function, and uniformly on every compact subset of the convergence interval. Concerning the Fourier series, if the function is square-integrable then the series converges in quadratic mean, but additional requirements are needed to ensure the pointwise or uniform convergence (for instance, if the function is periodic and of class C^1 then the convergence is uniform).

- Finally, in practice one wants to approximate the function with a finite number of terms, say with a Taylor polynomial or a partial sum of the trigonometric series, respectively. In the case of the Taylor series the error is very small in a neighbourhood of the point where it is computed, while it may be very large at a distant point. In the case of the Fourier series the error is distributed along the domain of the function.

Notes

1. 'Neither Newton nor Leibniz – The Pre-History of Calculus and Celestial Mechanics in Medieval Kerala' (PDF). MAT 314. Canisius College. Archived (PDF) from the original on 2015-02-23. Retrieved 2006-07-09.

2. S. G. Dani (2012). 'Ancient Indian Mathematics – A Conspectus'. Resonance. 17 (3): 236–246. doi:10.1007/s12045-012-0022-y.

3. Ranjan Roy, The Discovery of the Series Formula for π by Leibniz, Gregory and Nilakantha, Mathematics Magazine, Vol. 63, No. 5 (Dec., 1990), pp. 291–306.

4. Thomas & Finney 1996, §8.9

5. Lindberg, David (2007). The Beginnings of Western Science (2nd ed.). University of Chicago Press. p. 33. ISBN 978-0-226-48205-7.

6. Kline, M. (1990). Mathematical Thought from Ancient to Modern Times. New York: Oxford University Press. pp. 35–37. ISBN 0-19-506135-7.

7. Boyer, C.; Merzbach, U. (1991). A History of Mathematics (Second revised ed.). John Wiley and Sons. pp. 202–203. ISBN 0-471-09763-2.

8. Taylor, Brook (1715). Methodus Incrementorum Directa et Inversa [Direct and Reverse Methods of Incrementation] (in Latin). London. pp. 21–23 (Prop. VII, Thm. 3, Cor. 2). Translated into English in Struik, D. J. (1969). A Source Book in Mathematics 1200–1800. Cambridge, Massachusetts: Harvard University Press. pp. 329–332.

9. Rudin, Walter (1980), Real and Complex Analysis, New Delhi: McGraw-Hill, p. 418, Exercise 13, ISBN 0-07-099557-5

10. Feller, William (1971), An introduction to probability theory and its applications, Volume 2 (3rd ed.), Wiley, pp. 230–232.

11. Hille, Einar; Phillips, Ralph S. (1957), Functional analysis and semi-groups, AMS Colloquium Publications, 31, American Mathematical Society, pp. 300–327.

12. Feller, William (1970). An introduction to probability theory and its applications. 2 (3 ed.). p. 231.

13. Most of these can be found in (Abramowitz & Stegun 1970).

14. Lars Hörmander (1990), The analysis of partial differential operators, volume 1, Springer, Eqq. 1.1.7 and 1.1.7′

15. Duistermaat; Kolk (2010), Distributions: Theory and applications, Birkhauser, ch. 6

References

- Abramowitz, Milton; Stegun, Irene A. (1970), Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables, New York: Dover Publications, Ninth printing

- Thomas, George B., Jr.; Finney, Ross L. (1996), Calculus and Analytic Geometry (9th ed.), Addison Wesley, ISBN 0-201-53174-7

- Greenberg, Michael (1998), Advanced Engineering Mathematics (2nd ed.), Prentice Hall, ISBN 0-13-321431-1

External links

- Hazewinkel, Michiel, ed. (2001) [1994], 'Taylor series', Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4

- Weisstein, Eric W. 'Taylor Series'. MathWorld.

- Taylor polynomial - practical introduction

- 'Discussion of the Parker-Sochacki Method'

- Another Taylor visualisation — where you can choose the point of the approximation and the number of derivatives

- Taylor series revisited for numerical methods at Numerical Methods for the STEM Undergraduate

- 'Essence of Calculus: Taylor series' – via YouTube.

Retrieved from 'https://en.wikipedia.org/w/index.php?title=Taylor_series&oldid=902269410'

When I use Maxima to calculate the Taylor series:

Basically I want to define a function as an expansion of (x+y)^3, which takes x and y as parameters. How can I achieve this?

Description

mtaylor(f, [x = x0, y = y0, ..]) computes the first terms of the multivariate Taylor series of f with respect to the variables x, y etc. around the points x = x0, y = y0 etc.

With the default mode RelativeOrder, the number of requested terms for the expansion is determined by order if specified. If no order is specified, the value of the environment variable ORDER is used. You can change the default value 6 by assigning a new value to ORDER. The terms are counted from the lowest total degree on for finite expansion points, and from the highest total degree term on for expansions around infinity.

If AbsoluteOrder is specified, order represents the truncation order of the series, i.e., no terms of total degree order or higher are computed. For infinite expansion points, the absolute values of the exponents of the corresponding variables are used to compute the total degree.

For finite expansion points x0, y0, .., the computed series with respect to the variables x, y, .. of weight w1, w2, .. is taylor(f(x0 + t^w1*(x - x0), y0 + t^w2*(y - y0), ..), t = 0), evaluated at the point t = 1.